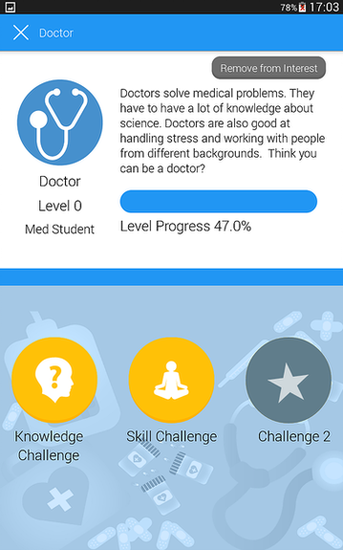

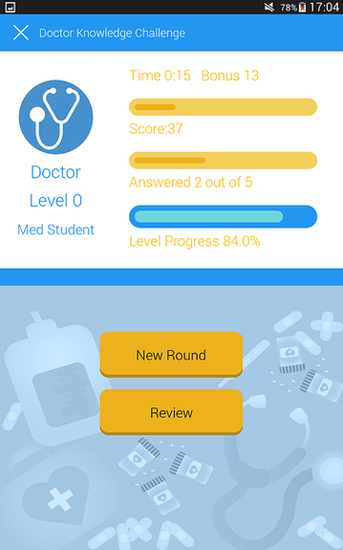

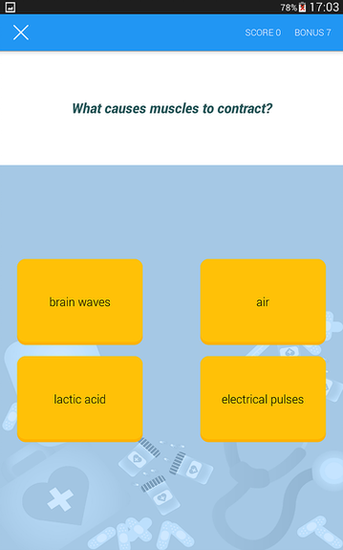

Software engineer with 10+ years of experience specializing in VR/AR and mobile applications. Expert in designing high-performance, immersive user interfaces for Meta Quest, iOS, and Android. Proven track record of building game-like interactive experiences that drive user retention and engagement, using modern AI-assisted development tools to accelerate delivery.